The PEAK Threat Hunting Framework: Full Guide and Examples

Threat hunting has evolved far beyond ad-hoc log digging and reactive incident response. As attackers adopt stealthier techniques, security teams need a structured way to search for what automated tools might miss.

The pressure is real: the 2026 CrowdStrike Global Threat Report highlights a 42% increase in zero-day vulnerabilities exploited before public disclosure, while Statista projects the global cost of cybercrime will reach $13.82 trillion by 2028.

That's where the PEAK Threat Hunting Framework comes in. It offers a practical, repeatable approach that turns hunting into a measurable, ongoing program rather than a series of one-off efforts. Designed to work across SIEM, EDR, and data lake environments, PEAK brings structure to proactive defence. In this article, we explore how it works and how to apply it in real-world scenarios.

Introducing the PEAK Threat Hunting Framework

The harsh reality for security teams is that signature-based defences struggle against patient, highly adaptable adversaries who've found ways to evade traditional detection for weeks or even months.

The PEAK threat hunting framework, a.k.a. "Prepare, Execute, Act with Knowledge," has emerged as a modern, vendor-agnostic approach that aims to make threat hunting repeatable, measurable, and in sync with the complex attack patterns seen in recent years.

PEAK is specifically designed for resource-strapped security operations center teams and threat intelligence analysts who are drowning in alert fatigue while simultaneously dealing with stealthy threats from APT groups, ransomware operators, and supply-chain attackers.

These adversaries have managed to bypass automated defences, making a proactive approach essential. Rather than waiting for alerts that might never happen, threat hunters using PEAK actively go out and search for unknown intrusions using a structured and documented threat hunting process.

Reactive incident response and proactive hunting differ in a fundamental way. Incident response teams leap into action after something has been detected, but what happens when the intrusion never triggers an alert? PEAK addresses that gap with a practical, step-by-step structure rather than just abstract theory.

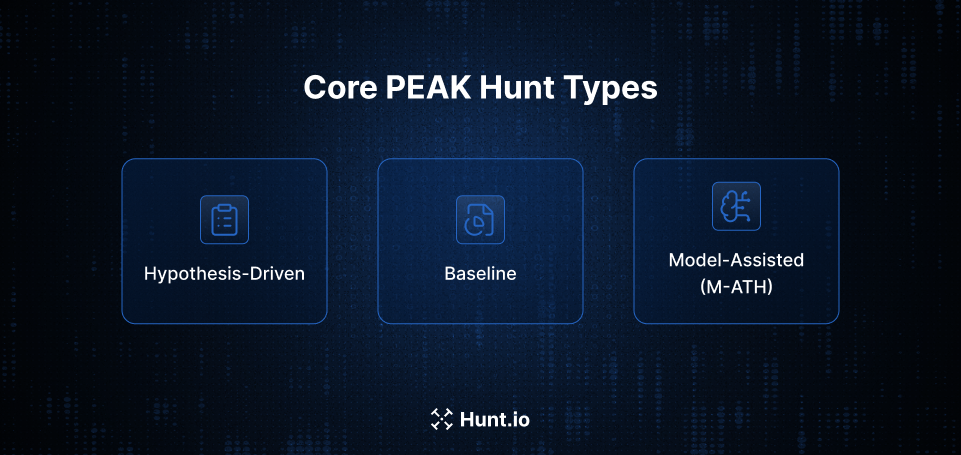

It brings together three different types of threat hunting (hypothesis-driven, baseline, and model-assisted) into a single lifecycle that works across SIEMs, data lakes, and EDR/XDR platforms.

Now, before diving deeper into PEAK itself, it helps to step back and understand where threat hunting frameworks fit in the first place. Why do organizations need them, and what problems are they actually solving for security teams?

The Importance of Threat Hunting Frameworks

A threat hunting framework is a documented, repeatable process for planning, running and operationalizing hunts across an organization. Without one, hunting expeditions tend to be hero-driven activities that rely on individual expertise and rarely produce lasting value. With a framework, organizations turn one-off investigations into a strategic program with consistent documentation, actionable metrics, and clear hand-offs to SOC and IR teams.

Frameworks take scattered efforts and turn them into measurable outcomes. Instead of asking "did we find anything?" leadership can track metrics like dwell time reduction, new detections created per hunt, and misconfigurations remedied. Findings get fed into detection engineering pipelines rather than getting lost in analyst notebooks.

The evolution of hunting programs reflects changing threat landscapes. Early frameworks from a few years back established the hypothesis loop concept, but today's organizations are facing challenges that those approaches didn't anticipate: cloud infrastructure, SaaS applications, remote work environments, and living-off-the-land techniques. Modern threat actors are abusing legit tools, persisting on edge devices, and exploiting identity systems in ways that demand updated hunting approaches.

If no framework exists, you can pretty much bet on the following pain points:

Hunts aren't recorded, so when analysts leave, all that knowledge just disappears.

Different hunters are doing the same work without even realizing it.

Findings never turn into automated detections, so you're stuck doing the same investigation over and over again.

Executives view hunting as "a nice to have" rather than a strategic control.

PEAK is designed to close those gaps: it's recent, it's iterative, and specifically focused on closing the loop between hunting, detection engineering, and resilience improvement.

So what does that actually look like in practice? The easiest way to understand PEAK is to look at its core structure and how the framework organizes a hunt from start to finish.

The PEAK Framework at a Glance: Prepare, Execute, Act with Knowledge

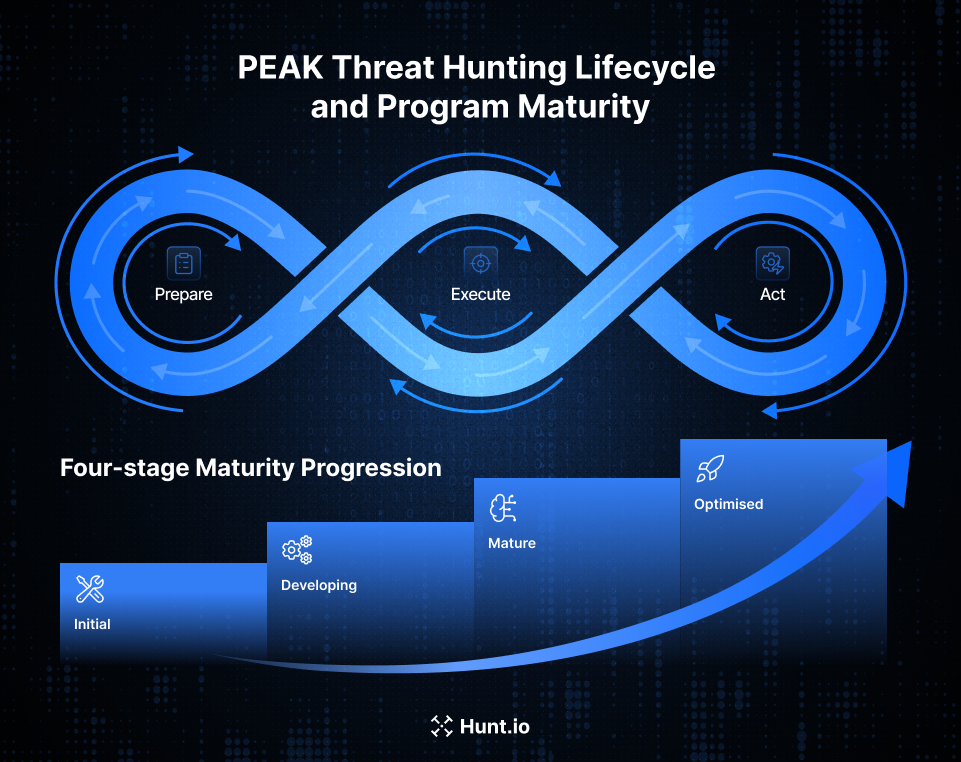

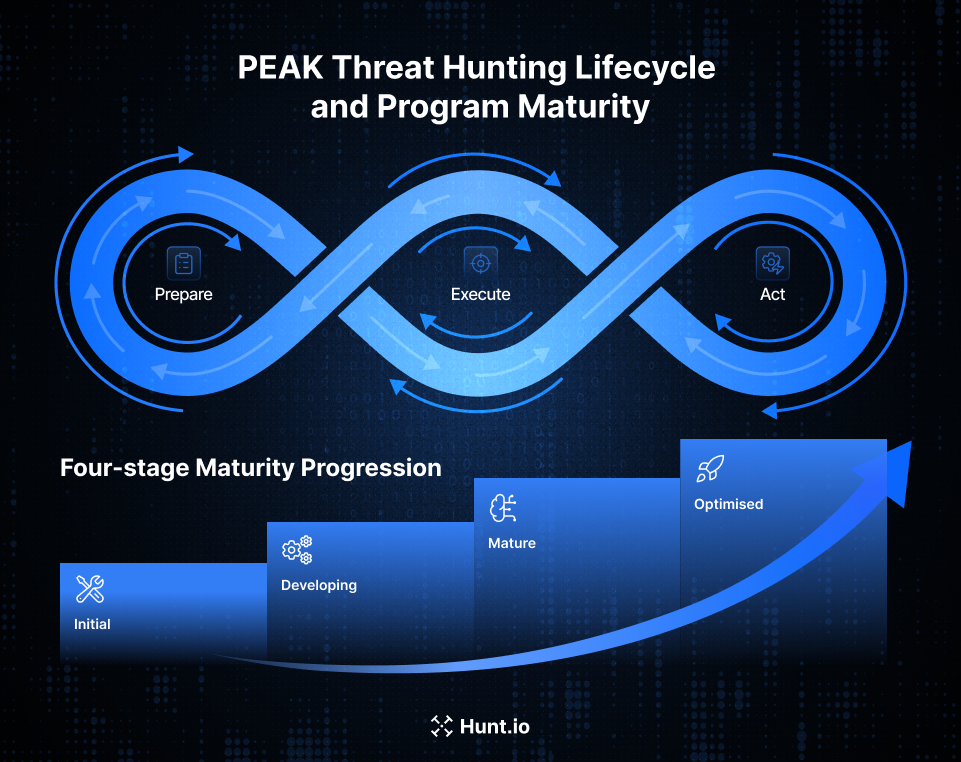

The PEAK framework is a continuous loop with three main phases flowing into each other. As its creator, Splunk, puts it: "Each PEAK hunt follows a three-stage process: Prepare, Execute, and Act."

Prepare leads into Execute, which leads to Act with Knowledge, which in turn feeds back into the next Prepare cycle. Knowledge runs throughout the whole thing, informing every step rather than existing as a separate component.

Each phase has a distinct purpose:

Prepare: Figure out what to hunt, choose your data sources, and scope the activity so you're spending time on the risks that matter rather than just random exploration.

Execute: Analyze data, test your hypotheses and identify malicious or risky behaviour through a structured investigation.

Act with Knowledge: Take your findings and turn them into detections, playbooks and improved controls that outlast the individual hunt.

Knowledge integration sets PEAK apart from simpler approaches. This knowledge comes from threat intelligence, internal incident history, red-team exercises and lessons learned from previous hunts. Every iteration builds on what you've learned rather than starting from scratch.

Flexibility is at the heart of the PEAK design. Threat hunters can iterate within the Execute phase, narrow or expand scope mid-hunt and pivot to new hypotheses without having to start the whole framework over again. That reflects the reality that investigations don't usually go in straight lines: leads pop up, assumptions get proven wrong, and a fresh perspective often reveals some unexpected patterns.

PEAK was built to support three complementary hunt types (hypothesis-driven, baseline and model-assisted), so security teams can start simple and grow into more advanced threat hunting.

Organizations don't need to have machine learning capabilities from day one: they can start with hypothesis-driven hunts and mature into model-assisted hunts as their programs develop. Reviewing the Threat Hunting Maturity Model is a helpful way to understand that progression.

Within that lifecycle, PEAK supports several different ways of approaching a hunt. Each method fits different levels of data availability, tooling, and team maturity.

Core PEAK Hunt Types: Hypothesis, Baseline, and Model-Assisted

Instead of telling you how to hunt, PEAK sets out three hunt patterns that you can use, mix, and match, depending on what resources you've got available, how much data you're working with, and what specific objectives you're trying to achieve.

| Hunt Type | Best For | Key Requirement |
|---|---|---|
| Hypothesis-Driven | Investigating specific threat intelligence | Clear, testable statement about adversary behaviour |
| Baseline | Understanding normal activity and surfacing anomalies | Historical data to establish patterns |
| Model-Assisted (M-ATH) | Scaling analysis with automation | Machine learning capabilities or UEBA |

All three hunt types go through the same PEAK phases (Prepare, Execute, Act with Knowledge), so even though the methods vary, the program stays consistent. This means organizations can track hunts uniformly, no matter what approach they're using.

Hypothesis-Driven Hunts

Hypothesis-driven hunts are the classic approach to threat hunting.

You start with a specific, testable statement about what the enemy is up to, such as: "If an attacker has compromised an account through MFA fatigue, then we should see unusual OAuth consent grants for that account in Microsoft 365 within the last 14 days."

During the Prepare phase, threat hunters:

Turn threat intelligence or incident reports into precise hypotheses.

Pick the right data sources (like identity provider logs, cloud audit logs, and VPN logs).

Scope the timeframe and environment segments (focusing on high-value admin accounts, for example).

The Execute phase uses structured queries, pivoting, and correlation to support or refute the hypothesis.

A practical example might involve searching for unusual OAuth consent grants or high-risk sign-ins in Azure AD (now Microsoft Entra ID), then checking whether any of those events line up with impossible-travel indicators or atypical user agents.
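
The correlation step described above can be sketched in a few lines of Python. This is a minimal illustration, not a production query: the `ConsentGrant` and `RiskySignIn` record shapes, the sample data, and the one-hour window are all assumptions made for the example, and real identity-provider logs use different field names and far more fields.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


# Hypothetical, simplified event shapes (not a real log schema).
@dataclass(frozen=True)
class ConsentGrant:
    user: str
    app_name: str
    time: datetime


@dataclass(frozen=True)
class RiskySignIn:
    user: str
    source_ip: str
    time: datetime


def correlate(grants, sign_ins, window=timedelta(hours=1)):
    """Return (grant, sign_in) pairs for the same user within `window`."""
    by_user = {}
    for sign_in in sign_ins:
        by_user.setdefault(sign_in.user, []).append(sign_in)

    leads = []
    for grant in grants:
        for sign_in in by_user.get(grant.user, []):
            if abs(grant.time - sign_in.time) <= window:
                leads.append((grant, sign_in))
    return leads


# Illustrative sample data only.
grants = [
    ConsentGrant("alice", "Mail Sync Helper", datetime(2024, 5, 1, 9, 30)),
    ConsentGrant("bob", "Calendar Tool", datetime(2024, 5, 1, 14, 0)),
]
sign_ins = [
    RiskySignIn("alice", "203.0.113.7", datetime(2024, 5, 1, 9, 10)),
    RiskySignIn("bob", "198.51.100.4", datetime(2024, 5, 3, 8, 0)),
]

leads = correlate(grants, sign_ins)
for grant, sign_in in leads:
    print(f"{grant.user}: consent to {grant.app_name!r} "
          f"near risky sign-in from {sign_in.source_ip}")
```

Each pair is only a lead for an analyst to review. A consent grant close to a risky sign-in supports the hypothesis but does not confirm compromise on its own.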

In the Act phase, you take validated hypotheses and turn them into:

New SIEM detection rules for abnormal consent grants.

Updated IAM policies and just-in-time access rules.

Playbooks that guide analysts when they spot similar patterns again.

This approach is best suited to teams that already have some experience, with threat intelligence feeds and incident post-mortems up and running.

It brings focus and rigour, so hunting time is spent on known enemy behaviours rather than random exploration.

Baseline Hunts

Baseline hunts try to establish what normal activity looks like for a particular behaviour, and then see where the outliers are.

Think of it this way: what does typical admin access to our domain controllers look like over 90 days, and what falls outside that?

Preparation for baseline hunts involves:

Choosing the behaviour and scope (all privileged sign-ins to domain controllers for a specific quarter).

Picking broad telemetry sources (like authentication logs, network flow records, or Zeek conn.log).

Deciding on statistical or heuristic markers of normal (like typical source IP ranges, business hours, usual protocols).

During execution, you aggregate counts and distributions and look for the top-talkers, usual ports, and standard geographic locations.

Any deviations become investigation leads: new services appearing suddenly, rare destination addresses, or off-hours activity spikes that break established patterns.

The Act phase turns baseline findings into:

Anomaly-based detections (like "new admin workstation connecting to DC after midnight").

Updated access control lists, firewall rules or jump-box policies.

Seeds for future hypothesis-driven hunts to dig deeper into suspicious deviations.

A concrete example: suppose you baseline SSH traffic from network devices and find that routers only ever connect to internal management systems. If a later review shows an SSH session from a router to an external IP, that's a deviation that needs investigating, and it might become a permanent detection rule.
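
To make the SSH example tangible, here is a small Python sketch of the "build a baseline, then flag what is new" idea. The flow tuples, device names, and addresses are hypothetical sample data; in practice the records would come from network flow logs or Zeek conn.log, and the baseline would cover a much longer period.

```python
from collections import Counter

# Hypothetical flow records: (source_device, destination_ip, destination_port)
historical_flows = [
    ("router-01", "10.0.5.10", 22),
    ("router-01", "10.0.5.10", 22),
    ("router-02", "10.0.5.11", 22),
]
recent_flows = [
    ("router-01", "10.0.5.10", 22),
    ("router-02", "198.51.100.23", 22),
]


def build_baseline(flows):
    """Count how often each (source, destination, port) tuple was seen."""
    return Counter(flows)


def find_deviations(baseline, flows):
    """Return flows whose tuple never appeared in the baseline period."""
    return [flow for flow in flows if flow not in baseline]


baseline = build_baseline(historical_flows)
deviations = find_deviations(baseline, recent_flows)

for source, destination, port in deviations:
    print(f"New path: {source} -> {destination}:{port}")
```

Keeping counts in the baseline, rather than a plain set, leaves room to also flag rare but previously seen paths later on.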

Model-Assisted Threat Hunts (M-ATH)

Model-Assisted Threat Hunts use machine learning or analytics to pre-surface patterns, but human analysts are still essential for interpretation and validation.

This approach combines the structured investigation of hypothesis-driven hunting with the anomaly detection of baseline hunting, with a dash of ML automation on top.

Models can run the gamut from simple unsupervised clustering on authentication patterns to sophisticated supervised models trained on labelled attack data.

They might run in SIEM UEBA modules, data lake notebooks, or dedicated analytics platforms.

Preparation for M-ATH requires:

Ensuring the right datasets (like process telemetry, DNS logs and proxy logs) are being consistently ingested and retained.

Picking which models or UEBA risk scores will guide the hunt.

Defining acceptable false-positive rates and triage workload guardrails.

Execution involves reviewing the highest-risk entities flagged by the models, then pivoting into raw logs to validate whether any anomalies match up with known TTPs.

A high-risk user score might indicate lateral movement, credential theft or data exfiltration, but only human analysis can confirm what the model has surfaced.
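
As a rough illustration of "let a score surface entities, then have a human validate", the sketch below ranks accounts with a simple robust outlier score (distance from the median, scaled by the median absolute deviation). This is a deliberately simple statistical stand-in, not a UEBA product or a trained ML model, and the account names, counts, and the threshold of 5 are assumptions chosen for the example.

```python
from statistics import median

# Hypothetical daily counts of distinct hosts each account logged on to.
hosts_per_user = {
    "alice": 3,
    "bob": 4,
    "carol": 2,
    "dave": 3,
    "svc-backup": 41,
}


def robust_scores(values):
    """Score each entity by its distance from the median, in MAD units."""
    med = median(values.values())
    mad = median(abs(v - med) for v in values.values())
    scale = mad if mad else 1.0  # avoid division by zero on flat data
    return {name: (v - med) / scale for name, v in values.items()}


THRESHOLD = 5  # assumed cut-off; tune against triage workload

scores = robust_scores(hosts_per_user)
review_queue = sorted(
    (name for name, score in scores.items() if score > THRESHOLD),
    key=lambda name: -scores[name],
)

for name in review_queue:
    print(f"Review {name}: score {scores[name]:.1f}")
```

The output is a review queue, not a verdict. An analyst still has to pivot into raw logs to decide whether the flagged account reflects lateral movement or a legitimate job.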

The Act phase for M-ATH might include:

Feeding confirmed malicious examples back into training datasets.

Converting repetitive high-confidence model outputs into deterministic detection rules.

Documenting model limitations so hunters understand where human judgment needs to step in.

M-ATH is the most technologically sophisticated of the PEAK hunt types, suitable for organizations with the data and analytics expertise to make it work.

Understanding these hunt types is useful, but the real value comes from seeing how they move through the PEAK lifecycle itself. The framework becomes much clearer once you walk through how a hunt actually unfolds across the three phases.

Operationalizing the PEAK Framework Phases

Now let's see how to make each PEAK phase a reality within existing tools, whether that's a SIEM, EDR, NDR or SOAR platform. A single scenario runs through all three phases: hunting for adversaries targeting backup infrastructure, a pattern described in recent security advisories.

When you operationalize each PEAK phase, you'll produce specific artifacts and involve defined roles. The framework gives you structure, without telling you which tech to use, letting teams implement PEAK regardless of their existing vendor stack.

Phase 1: Prepare

The Prepare phase gets everyone on the same page and makes sure you're tackling impactful risks, not just wading through log data for kicks. Time spent here will make the execution phase much more effective.

Here's what you need to do to prepare:

Define the hunt goal based on a real threat:

"Catch activity that's consistent with an actor deploying ransomware to backup servers."

"Reveal edge-device persistence similar to campaigns we've seen in recent government advisories."

Translate threat intel into something concrete and testable:

Use a pattern like Actor--Behaviour--Location--Evidence.

Example: "A ransomware operator (actor) disabling backup services and deleting shadow copies (behaviour) on Windows backup servers (location) within the last 30 days (evidence scope)."

Work out which data sources you need:

EDR telemetry from backup servers.

Storage appliance logs.

VPN logs for admin access.

Network telemetry at the data centre edge.

Make some scoping decisions:

How far back to look (previous 30 or 60 days).

Which systems to investigate (production backup clusters and their management interfaces).

Which constraints you need to work around (performance impact, query cost in cloud SIEM).
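
One way to keep the Actor, Behaviour, Location, Evidence pattern consistent across hunts is to capture it as a small structured record. The sketch below is one possible shape, assuming a hypothetical `HuntHypothesis` class; the field names are illustrative and not part of PEAK itself.

```python
from dataclasses import dataclass, field


@dataclass
class HuntHypothesis:
    """Hypothetical container for an Actor-Behaviour-Location-Evidence hypothesis."""
    actor: str
    behaviour: str
    location: str
    evidence_window_days: int
    data_sources: list = field(default_factory=list)

    def statement(self) -> str:
        """Render the hypothesis as a single testable sentence."""
        return (
            f"{self.actor} {self.behaviour} on {self.location} "
            f"within the last {self.evidence_window_days} days"
        )


hypothesis = HuntHypothesis(
    actor="A ransomware operator",
    behaviour="disabling backup services and deleting shadow copies",
    location="Windows backup servers",
    evidence_window_days=30,
    data_sources=[
        "EDR telemetry",
        "storage appliance logs",
        "VPN logs",
        "network telemetry",
    ],
)

print(hypothesis.statement())
```

Storing the data sources and time window next to the statement makes the scoping decisions explicit before any query is run.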

The Prepare phase spits out a few super useful artifacts:

A short cheat sheet of what to do and when.

A list of permissions and access requirements.

Some pre-built queries or dashboards to speed up execution.

Don't skip this phase: it's what makes the difference between a successful hunt and a waste of time. People who skip this phase often end up wandering through log data without any clear idea of what they're doing.

Phase 2: Execute

The Execute phase is where you run the plan you made in the Prepare phase, and start drilling down into the interesting bits. This is where you run queries, look at anomalies, and decide whether to widen or narrow the search.

Typically, you start with broad queries to validate your assumptions: understanding normal backup admin logons and network paths for replication traffic. This helps you get the context before you drill down into the interesting stuff.

Once you have a lead, you start looking at deviations from the norm, like backup servers initiating RDP or SMB sessions to unusual hosts.

Investigative techniques include:

Visualizing logon failures and successes in a time-series chart.

Pivoting from one log source to another using common identifiers like hostnames or user IDs.

Labelling events as benign, suspicious, or confirmed malicious so you can see how you got there.
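
The pivoting and labelling techniques above can be sketched as follows. The event dictionaries, the `pivot` and `label` helpers, and the host names are hypothetical; real EDR and VPN logs would need normalizing to a shared identifier before a pivot like this works.

```python
from collections import defaultdict

# Hypothetical, already-normalized events from two log sources.
edr_events = [
    {"host": "backup-01", "user": "svc-backup", "detail": "vssadmin delete shadows"},
    {"host": "web-03", "user": "deploy", "detail": "nginx reload"},
]
vpn_events = [
    {"host": "backup-01", "user": "svc-backup", "detail": "login from 203.0.113.50"},
]


def pivot(key, **sources):
    """Group events from several sources by a shared identifier."""
    grouped = defaultdict(list)
    for source_name, events in sources.items():
        for event in events:
            grouped[event[key]].append((source_name, event["detail"]))
    return grouped


by_host = pivot("host", edr=edr_events, vpn=vpn_events)

# Hosts that show up in more than one source are natural pivot points.
multi_source = {
    host: events
    for host, events in by_host.items()
    if len({source for source, _ in events}) > 1
}

VERDICTS = {"benign", "suspicious", "malicious"}
labels = {}


def label(host, verdict, note):
    """Record an analyst verdict so the reasoning trail is preserved."""
    if verdict not in VERDICTS:
        raise ValueError(f"unknown verdict: {verdict}")
    labels[host] = (verdict, note)


label("backup-01", "suspicious", "shadow copy deletion after VPN login")
```

The verdict here is entered by the analyst, not derived by the code. The helper only enforces a consistent vocabulary and keeps the note alongside the decision.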

The Execute phase is iterative: you might start with one hypothesis, but as you dig deeper and find new evidence, your theory might change. And that's okay. Even if you don't find anything malicious, confirming that your security controls are working is valuable too.

What you should get out of the Execute phase is:

Confirmed or disproved hypotheses with some actual evidence.

Some interesting patterns that you might want to follow up on next time.

A nice tidy pack of evidence (queries, screenshots, log snippets) ready for the Act phase to turn into something useful.

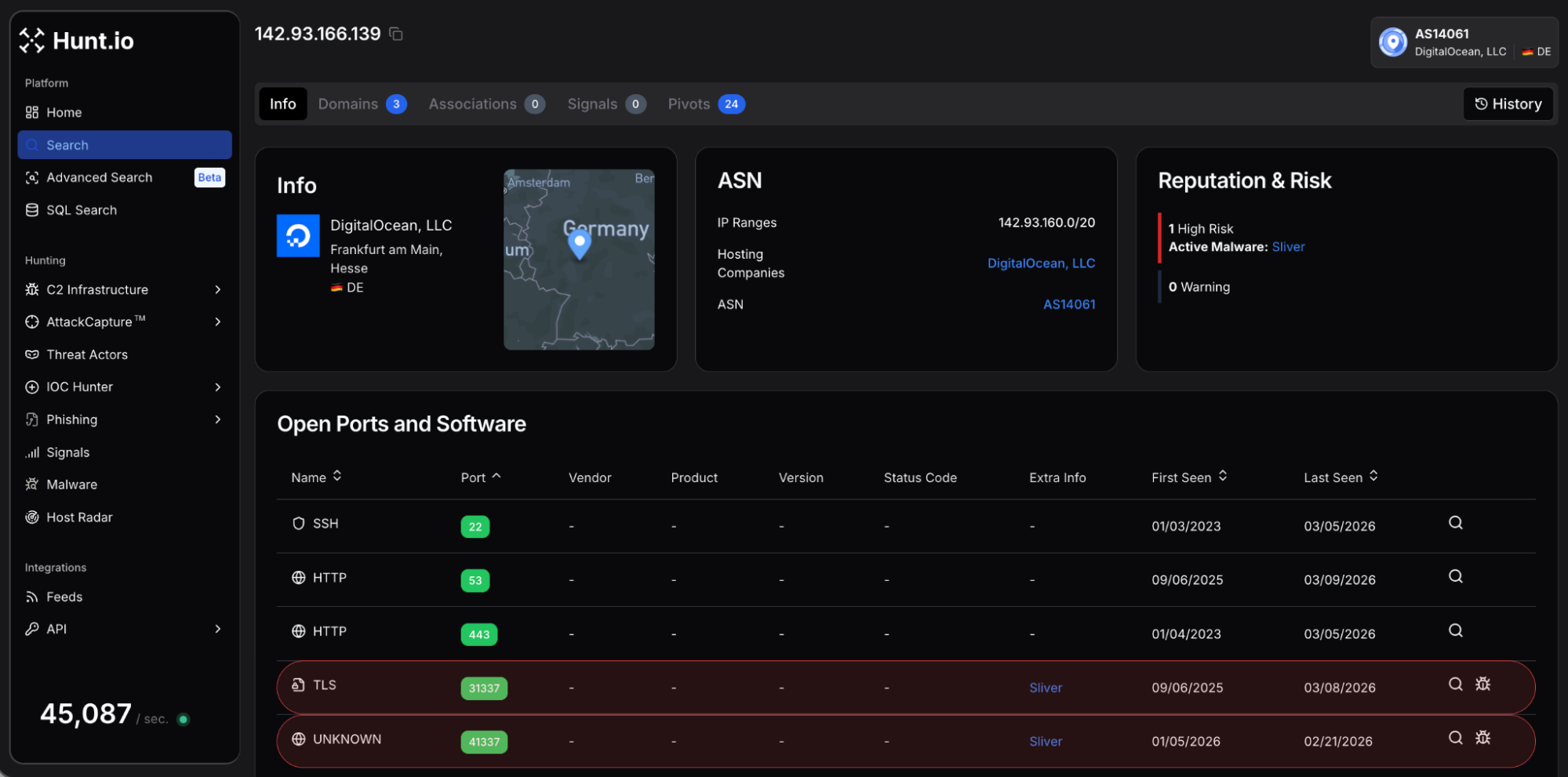

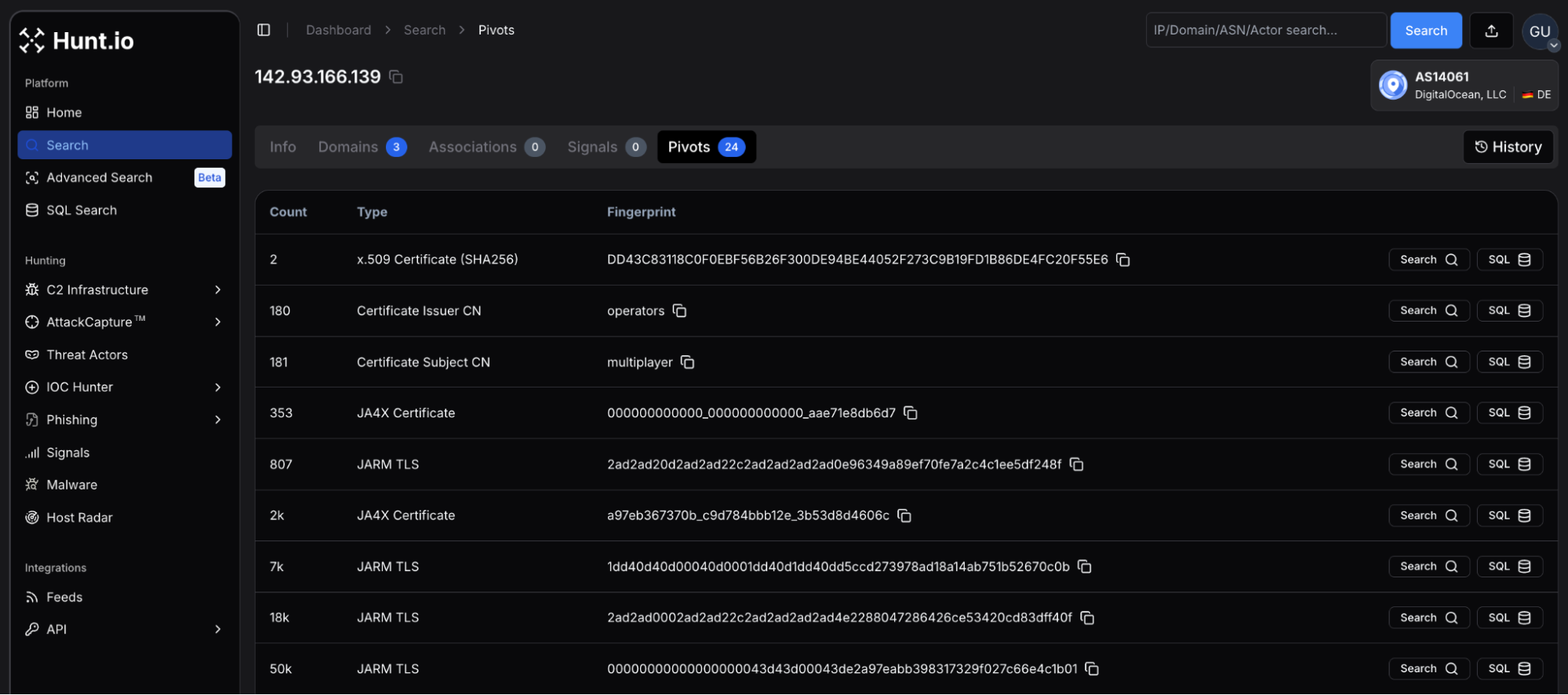

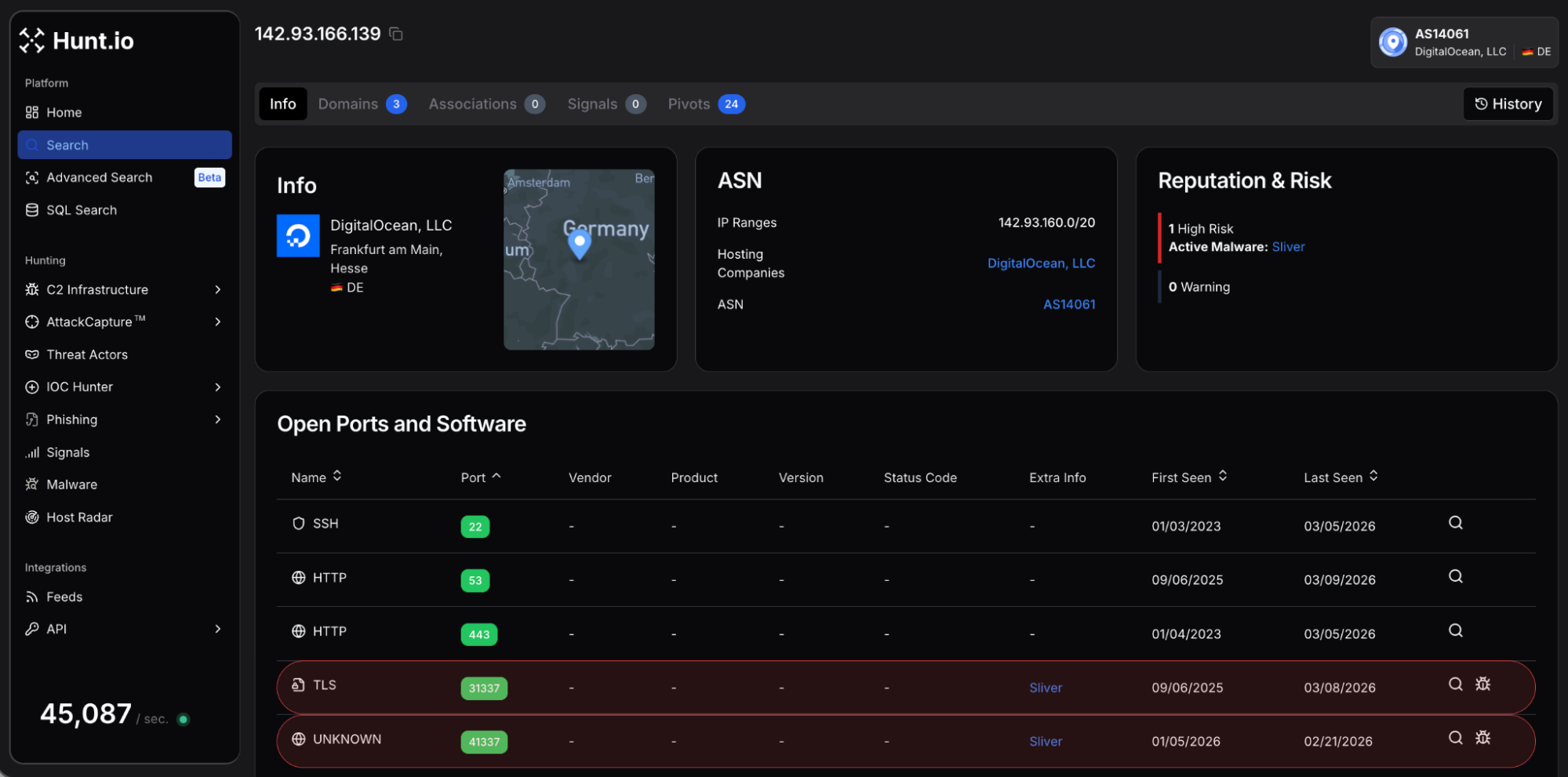

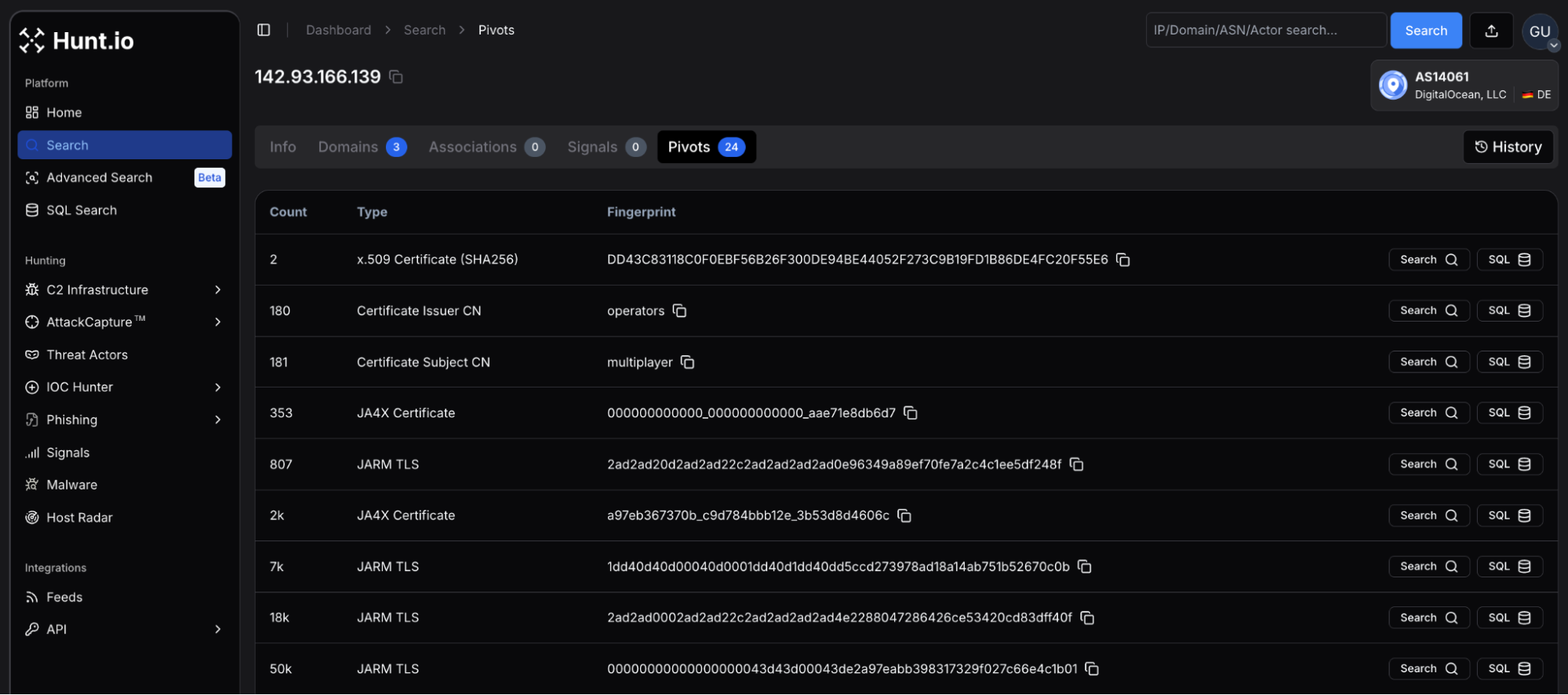

During execution, visibility matters. When investigating suspicious IPs or domains, hunters often need to pivot across certificates, JA4X fingerprints, hosting clusters, and historical infrastructure.

An advanced threat hunting platform like Hunt.io accelerates this step by surfacing open ports, TLS fingerprints such as JA4X and JARM, certificate reuse, hosted domains, and ASN relationships in one view. That makes it easier to expand a hypothesis beyond a single indicator and understand the broader infrastructure behind an intrusion.

Phase 3: Act (with Knowledge)

The Act phase is where you take the knowledge you've gained and turn it into something real. It's about preventing all that useful information from getting stuck in analyst notebooks.

What typically happens in the Act phase is:

Create or refine some detections:

SIEM rules for the specific malicious activity you found.

EDR detection rules for suspicious process behaviours.

Network signatures for command-and-control patterns.

Update your incident response playbooks:

Document any new procedures you've observed.

Add some investigation steps for similar incidents in the future.

Include any relevant IOCs and behavioural indicators.

Propose some hardening changes:

Restrict remote management paths.

Segment backup networks from the rest of the infrastructure.

Implement some additional monitoring on critical systems.

It's a good idea to document your hunts in a structured format:

| Element | Description |
|---|---|
| Objective | What the hunt aimed to discover |
| Scope | Systems, timeframe, and constraints |
| Hypotheses | Specific testable statements investigated |
| Data Sources | Logs and telemetry used |
| Queries | Key searches performed |
| Results | Findings, including negatives |
| Detection Artifacts | Rules or signatures created |
| Lessons Learned | Data gaps, technique effectiveness |
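
The elements in the table map naturally onto a structured record that can be stored and searched later. The following is a minimal sketch, assuming a hypothetical `HuntRecord` class serialized to JSON; the field names mirror the table, and the sample values (including the query string and rule names) are invented for illustration.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class HuntRecord:
    """Hypothetical hunt write-up mirroring the documentation table."""
    objective: str
    scope: str
    hypotheses: list
    data_sources: list
    queries: list = field(default_factory=list)
    results: str = ""
    detection_artifacts: list = field(default_factory=list)
    lessons_learned: list = field(default_factory=list)


record = HuntRecord(
    objective="Find ransomware staging activity on backup servers",
    scope="Production backup clusters, previous 30 days",
    hypotheses=["Backup services disabled and shadow copies deleted"],
    data_sources=["EDR telemetry", "VPN logs"],
    queries=["process_name = vssadmin.exe AND args CONTAINS 'delete shadows'"],
    results="No malicious activity found; one misconfigured service account",
    detection_artifacts=["rule: shadow-copy-deletion-on-backup-hosts"],
    lessons_learned=["Storage appliance logs were not retained long enough"],
)

serialized = json.dumps(asdict(record), indent=2)
print(serialized)
```

Recording negative results in the same format matters as much as recording findings, since it shows which hypotheses were already tested and with what data.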

Share this knowledge widely:

With your SOC and IR teams during regular review meetings.

With the platform owners responsible for backups, identity and edge devices.

With leadership in a concise way, like "3 new detection rules deployed" or "2 misconfigurations fixed."

When infrastructure patterns repeat across campaigns, enrichment platforms like Hunt.io can help security teams transform those patterns into reusable logic rather than isolated indicators.

The Act phase is what feeds into the next Prepare phase: capturing lessons learned so that you can do better next time.

When this cycle repeats over time, it begins to shape the maturity of the entire hunting program. What starts as isolated hunts gradually turns into a structured capability that improves with each iteration.

PEAK Threat Hunting Lifecycle and Program Maturity

Individual hunts are important, but the real value comes from running PEAK as a continuous lifecycle, measuring maturity over months and years. Each Prepare → Execute → Act cycle feeds metrics and lessons into the next iteration.
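
As a sketch of what "feeding metrics into the next iteration" could look like, the snippet below rolls a few per-hunt outcomes into program-level numbers. The hunt names, field names, and figures are made up for the example; which metrics matter will depend on the organization.

```python
# Hypothetical per-hunt outcomes collected at the end of each Act phase.
hunts = [
    {"name": "backup-ransomware", "detections_created": 3, "misconfigs_fixed": 2},
    {"name": "edge-persistence", "detections_created": 1, "misconfigs_fixed": 0},
    {"name": "oauth-consent", "detections_created": 0, "misconfigs_fixed": 1},
]


def summarize(hunts):
    """Aggregate per-hunt outcomes into simple program-level metrics."""
    total = len(hunts)
    detections = sum(h["detections_created"] for h in hunts)
    return {
        "hunts_completed": total,
        "detections_created": detections,
        "detections_per_hunt": round(detections / total, 2) if total else 0.0,
        "misconfigs_fixed": sum(h["misconfigs_fixed"] for h in hunts),
        "hunts_with_output": sum(
            1 for h in hunts if h["detections_created"] or h["misconfigs_fixed"]
        ),
    }


summary = summarize(hunts)
for metric, value in summary.items():
    print(f"{metric}: {value}")
```

Tracking the same handful of numbers after every cycle is what makes trends visible over months rather than within a single hunt.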

Over time, an organization builds up a collection of hunts, detections, and playbooks that track the current state of adversary tradecraft. That growing library, rather than a pile of one-off activities, is the sign of continuous improvement.

Maturity levels move through distinct phases:

Initial Stage

Hunts are pretty ad-hoc, only kicked off when something big has gone wrong.

Not much in the way of documentation.

Rarely do the findings from a hunt actually get used to create any new detections.

There aren't really any consistent metrics in place.

Developing Maturity

Hunts are now scheduled regularly, focusing on some of the areas we know are at highest risk (like identity, backups, and cloud control planes).

We're starting to keep track of the things we've found and the detections we've created.

There's some basic knowledge sharing going on between the hunters.

We're starting to design repeatable processes that help us stay consistent.

Mature Stage

Hunts are now planned in line with threat intelligence.

We're routinely turning validated patterns into automated defences.

We're regularly reviewing our detection coverage to see if we're missing anything that we should be looking for.

Our metrics are driving our resource allocation decisions.

Optimised Stage

Our hunting is now fully integrated with detection engineering, CTI, red teaming and architecture review.

Our metrics are driving our budget and design decisions all the time.

We've got a proactive strategy in place that is guiding our security investments.

Our hunts are continually improving our overall security posture.

PEAK provides the scaffolding that lets us move between these stages without needing a specific vendor stack. We can start with just the basics and gradually bring in more telemetry and analytics as our programme matures.

To make this progression more concrete, it helps to walk through an example. The following scenario shows how a PEAK hunt might look when applied to a real-world security problem.

PEAK in Practice: An Example Hunt on Critical Infrastructure

This is a scenario that shows PEAK in practice: hunting for adversaries that are after our backup infrastructure and VPN gateways, something that's been going on for years and is documented in the security advisories from 2023 and 2024.

Prepare Phase

The hunt goal is to identify whether any backup management interfaces or VPN gateways have been used as an entry point for attackers to move around our network over the last 45 days.

We're looking at a bunch of different data sources:

The logs from our VPN appliance.

The logs from the management plane of our network devices.

The authentication logs from our backup servers.

And some network flow data for the management subnets.

The hypothesis is all about threat actors who get in by compromising edge devices and then use them for persistent access, something we've seen a lot of in recent high-profile campaigns that targeted all sorts of different organizations across different sectors.

Execute Phase

We start by doing some baseline analysis. We establish what normal admin access to the management interfaces looks like: connections from specific jump hosts and admin-team IP addresses, during working hours. This gives us the context we need to spot any anomalies.

Then we do a hypothesis-driven investigation. We search for deviations from what we've seen before:

SSH or HTTPS management connections from IP addresses we don't know.

Backup servers that initiate outbound connections to Internet hosts.

Configuration changes to edge devices outside maintenance windows.

Authentication attempts using service accounts outside normal hours.

As we investigate, we start to see some high-level findings emerge. We identify a small number of sessions from an unusual IP range that are connecting to our VPN appliances during times when we wouldn't normally see them.

We correlate this with some logs from the appliances and find that some suspicious commands and configuration changes were going on during those sessions, things that don't look like they match with any legitimate admin tasks.
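
The first two checks in this scenario (unknown source addresses and off-hours sessions) can be sketched with Python's standard `ipaddress` and `datetime` modules. The admin network range, the business-hours window, and the session records are assumptions for illustration only.

```python
from datetime import datetime, time
from ipaddress import ip_address, ip_network

# Assumed values for the example; replace with your own baseline.
ADMIN_NETWORKS = [ip_network("10.20.0.0/24")]  # hypothetical jump-host range
BUSINESS_HOURS = (time(8, 0), time(18, 0))     # Monday to Friday


def is_known_admin_source(ip):
    """True if the address falls inside a known admin network."""
    address = ip_address(ip)
    return any(address in network for network in ADMIN_NETWORKS)


def in_business_hours(timestamp):
    """True on weekdays between the configured start and end times."""
    start, end = BUSINESS_HOURS
    return timestamp.weekday() < 5 and start <= timestamp.time() < end


def triage(session):
    """Return the list of reasons a session deviates from the baseline."""
    reasons = []
    if not is_known_admin_source(session["source_ip"]):
        reasons.append("unknown source")
    if not in_business_hours(session["time"]):
        reasons.append("outside business hours")
    return reasons


# Hypothetical VPN management sessions.
sessions = [
    {"source_ip": "10.20.0.15", "time": datetime(2024, 3, 5, 10, 30)},
    {"source_ip": "203.0.113.99", "time": datetime(2024, 3, 6, 2, 15)},
]

flagged = [(s["source_ip"], triage(s)) for s in sessions if triage(s)]
for source_ip, reasons in flagged:
    print(f"{source_ip}: {', '.join(reasons)}")
```

Sessions that trip both checks are the ones worth correlating with appliance command logs first.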

Act Phase

The team takes the findings from the hunt and turns them into lasting improvements:

New detection rules

Alert on management access from IP addresses that don't normally access the management interfaces.

Flag authentication by service accounts at strange times of day.

Detect configuration changes on network devices without any corresponding change tickets.

Hardening changes

We force all admin tasks to go through a monitored jump host.

We segment the network so that management plane access is restricted.

We enable detailed logging on all the management interfaces for the edge devices.

Updated playbooks

We document the investigation procedure for suspected edge-device compromise.

We include specific log sources and queries that the team can use to do a rapid triage.

We define the escalation paths and containment actions that should be taken.
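
Of the detection rules listed above, "configuration changes without a corresponding change ticket" is the easiest to prototype. The sketch below assumes hypothetical change-window and config-change records; real implementations would pull these from a ticketing system and device audit logs, and the ticket ID and device names here are invented.

```python
from datetime import datetime

# Hypothetical approved change windows from a ticketing system.
change_windows = [
    {
        "ticket": "CHG-1001",
        "device": "vpn-gw-01",
        "start": datetime(2024, 3, 4, 22, 0),
        "end": datetime(2024, 3, 5, 0, 0),
    },
]

# Hypothetical configuration-change events from device audit logs.
config_changes = [
    {"device": "vpn-gw-01", "time": datetime(2024, 3, 4, 22, 30), "user": "netadmin"},
    {"device": "vpn-gw-01", "time": datetime(2024, 3, 6, 2, 20), "user": "netadmin"},
]


def has_ticket(change, windows):
    """True if the change falls inside an approved window for that device."""
    return any(
        window["device"] == change["device"]
        and window["start"] <= change["time"] <= window["end"]
        for window in windows
    )


unapproved = [c for c in config_changes if not has_ticket(c, change_windows)]

for change in unapproved:
    print(f"Unapproved change on {change['device']} at {change['time']} by {change['user']}")
```

A rule like this depends on change tickets being recorded accurately, so the first runs are likely to surface process gaps as well as suspicious activity.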

This single PEAK-structured hunt uncovered some risky behaviour and permanently raised the level of our defensive baseline against similar attacks. It also generated some detection outputs that will automatically fire if the bad guys try to use similar techniques in the future.

Conclusion

Threat hunting delivers the most value when it becomes a structured, repeatable practice rather than an occasional investigation. The PEAK framework helps teams organize hunts, capture knowledge, and turn findings into lasting defensive improvements.

At Hunt, we help threat hunters move faster by exposing infrastructure relationships, TLS fingerprints, certificates, hosted domains, and historical activity that make it easier to expand investigations and uncover hidden attacker infrastructure.

Book a demo now to see how we can support PEAK-style investigations in your environment.

Threat hunting has evolved far beyond ad-hoc log digging and reactive incident response. As attackers adopt stealthier techniques, security teams need a structured way to search for what automated tools might miss.

The pressure is real: the 2026 CrowdStrike Global Threat Report highlights a 42% increase in zero-day vulnerabilities exploited before public disclosure, while Statista projects the global cost of cybercrime will reach $13.82 trillion by 2028.

That's where the PEAK Threat Hunting Framework comes in. It offers a practical, repeatable approach that turns hunting into a measurable, ongoing program rather than a series of one-off efforts. Designed to work across SIEM, EDR, and data lake environments, PEAK brings structure to proactive defence. In this article, we explore how it works and how to apply it in real-world scenarios.

Introducing the PEAK Threat Hunting Framework

The harsh reality for security teams is that signature-based defences simply can't keep up with the slow-moving, highly adaptable adversaries who've found ways to evade traditional detection for weeks or even months.

The PEAK threat hunting framework, a.k.a. "Prepare, Execute, Act with Knowledge," has emerged as a modern, vendor-agnostic approach that aims to make threat hunting repeatable, measurable, and in sync with the complex attack patterns seen in recent years.

PEAK is specifically designed for the resource-strapped security operations center teams and threat intelligence analysts who're drowning in alert fatigue while simultaneously dealing with the stealthy threats from APT groups, ransomware operators, and supply-chain attackers.

These adversaries have managed to bypass automated defences, making a proactive approach essential. Rather than waiting for alerts that might never happen, threat hunters using PEAK actively go out and search for unknown intrusions using a structured and documented threat hunting process.

Now, there's a big difference between reactive incident response and proactive hunting, and it's a fundamental one. Incident response teams leap into action after something's been detected, but what happens when the intrusion never triggers an alert? That's where the PEAK framework comes in, offering a practical, step-by-step structure rather than just abstract theory.

It brings together three different types of threat hunting: hypothesis-driven, baseline, and model-assisted, into a single lifecycle that works across SIEMs, data lakes, and EDR/XDR platforms.

Now, before diving deeper into PEAK itself, it helps to step back and understand where threat hunting frameworks fit in the first place. Why do organizations need them, and what problems are they actually solving for security teams?

The Importance of Threat Hunting Frameworks

A threat hunting framework is a documented, repeatable process for planning, running and operationalizing hunts across an organization. Without one, hunting expeditions tend to be hero-driven activities that rely on individual expertise and rarely produce lasting value. With a framework, organizations turn one-off investigations into a strategic program with consistent documentation, actionable metrics, and clear hand-offs to SOC and IR teams.

Frameworks take scattered efforts and turn them into measurable outcomes. Instead of asking "did we find anything?" leadership can track metrics like dwell time reduction, new detections created per hunt, and misconfigurations remedied. Findings get fed into detection engineering pipelines rather than getting lost in analyst notebooks.

The evolution of hunting programs reflects changing threat landscapes. Early frameworks from a few years back established the hypothesis loop concept, but today's organizations are facing challenges that those approaches didn't anticipate: cloud infrastructure, SaaS applications, remote work environments, and living-off-the-land techniques. Modern threat actors are abusing legit tools, persisting on edge devices, and exploiting identity systems in ways that demand updated hunting approaches.

If no framework exists, you can pretty much bet on the following pain points:

Hunts aren't recorded, so when analysts leave, all that knowledge just disappears.

Different hunters are doing the same work without even realizing it.

Findings never turn into automated detections, so you're stuck doing the same investigation over and over again.

Executives view hunting as "a nice to have" rather than a strategic control.

PEAK is designed to close those gaps: it's recent, it's iterative, and specifically focused on closing the loop between hunting, detection engineering, and resilience improvement.

So what does that actually look like in practice? The easiest way to understand PEAK is to look at its core structure and how the framework organizes a hunt from start to finish.

The PEAK Framework at a Glance: Prepare, Execute, Act with Knowledge

The PEAK framework is a continuous loop with three main phases flowing into each other. "Each PEAK hunt follows a three-stage process: Prepare, Execute, and Act" as stated by its creator, Splunk.

Prepare leads into Execute, which leads to Act with Knowledge, which in turn feeds back into the next Prepare cycle. Knowledge runs throughout the whole thing, informing every step rather than existing as a separate component.

Each phase has a distinct purpose:

Prepare: Figure out what to hunt, choose your data sources, and scope the activity so you're spending time on the risks that matter rather than just random exploration

Execute: Analyze data, test your hypotheses and identify malicious or risky behaviour through a structured investigation

Act with Knowledge: Take your findings and turn them into detections, playbooks and improved controls that outlast the individual hunt

Knowledge integration sets PEAK apart from simpler approaches. This knowledge comes from threat intelligence, internal incident history, red-team exercises and lessons learned from previous hunts. Every iteration builds on what you've learned rather than starting from scratch.

Flexibility is at the heart of the PEAK design. Threat hunters can iterate within the Execute phase, narrow or expand scope mid-hunt and pivot to new hypotheses without having to start the whole framework over again. That reflects the reality that investigations don't usually go in straight lines: leads pop up, assumptions get proven wrong, and a fresh perspective often reveals some unexpected patterns.

PEAK was built to support three complementary hunt types: hypothesis-driven, baseline and model-assisted, so security teams can start simple and grow into more advanced threat hunting.

Organizations don't need to have machine learning capabilities from day one: they can start with hypothesis-driven hunts and mature into model-assisted hunts as their programs develop. Reviewing the Threat Hunting Maturity Model is a helpful way to understand that progression.

Within that lifecycle, PEAK supports several different ways of approaching a hunt. Each method fits different levels of data availability, tooling, and team maturity.

Core PEAK Hunt Types: Hypothesis, Baseline, and Model-Assisted

Instead of telling you how to hunt, PEAK sets out three hunt patterns that you can use, mix, and match, depending on what resources you've got available, how much data you're working with, and what specific objectives you're trying to achieve.

| Hunt Type | Best For | Key Requirement |
|---|---|---|
| Hypothesis-Driven | Investigating specific threat intelligence | Clear, testable statement about adversary behaviour |
| Baseline | Understanding normal activity and surfacing anomalies | Historical data to establish patterns |
| Model-Assisted (M-ATH) | Scaling analysis with automation | Machine learning capabilities or UEBA |

All three hunt types go through the same PEAK phases: Prepare, Execute, Act with Knowledge, so even though the methods vary, the program stays consistent. This means organizations can track hunts uniformly, no matter what approach they're using.

Hypothesis-Driven Hunts

Hypothesis-driven hunts are the classic approach to threat hunting.

You start with a specific, testable statement about what the adversary is up to, such as: "If an attacker has compromised an account through MFA fatigue, we should see unusual OAuth token grants for that account in Microsoft 365 within the last 14 days."

During the Prepare phase, threat hunters:

Turn threat intelligence or incident reports into precise hypotheses.

Pick the right data sources (like identity provider logs, cloud audit logs, and VPN logs).

Scope the timeframe and environment segments (focusing on high-value admin accounts, for example).

The Execute phase uses structured queries, pivoting, and correlation to support or refute the hypothesis.

A practical example might involve searching for unusual OAuth consent grants or high-risk sign-ins in Microsoft Entra ID (formerly Azure AD), then checking whether any of those events line up with impossible travel indicators or atypical user agents.
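The correlation step described above can be sketched in a few lines of Python. This is a minimal illustration, not a production query: the record layout, field names, app names, and the one-hour window are all assumptions, and a real hunt would run against identity-provider exports or a SIEM rather than in-memory lists.

```python
from datetime import datetime, timedelta

# Hypothetical, simplified records; real identity-provider logs use different field names.
consent_grants = [
    {"user": "alice", "app": "MailSyncPro", "time": datetime(2025, 3, 1, 2, 14)},
    {"user": "bob", "app": "Contoso CRM", "time": datetime(2025, 3, 2, 10, 5)},
]
risky_sign_ins = [
    {"user": "alice", "time": datetime(2025, 3, 1, 1, 58), "reason": "impossible travel"},
    {"user": "carol", "time": datetime(2025, 3, 3, 9, 0), "reason": "atypical user agent"},
]


def correlate(grants, sign_ins, window=timedelta(hours=1)):
    """Pair each consent grant with a risky sign-in by the same user
    that happened no more than `window` before the grant."""
    leads = []
    for grant in grants:
        for sign_in in sign_ins:
            gap = grant["time"] - sign_in["time"]
            if grant["user"] == sign_in["user"] and timedelta(0) <= gap <= window:
                leads.append((grant, sign_in))
    return leads


leads = correlate(consent_grants, risky_sign_ins)
for grant, sign_in in leads:
    print(f"{grant['user']}: consent to {grant['app']} after {sign_in['reason']}")
```

With the sample data, only alice's grant is surfaced, because it follows a risky sign-in by 16 minutes. Each pair is a lead to investigate, not a verdict.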

In the Act phase, you take validated hypotheses and turn them into:

New SIEM detection rules for abnormal consent grants.

Updated IAM policies and just-in-time access rules.

Playbooks that guide analysts when they spot similar patterns again.

This approach is best suited to teams that already have some hunting experience, with threat intelligence feeds and incident post-mortems up and running.

It brings focus and rigour, so hunting time is spent on known adversary behaviours rather than on random exploration.

Baseline Hunts

Baseline hunts try to establish what normal activity looks like for a particular behaviour, and then see where the outliers are.

Think of it this way: what does typical admin access to our domain controllers look like over 90 days, and what falls outside that?

Preparation for baseline hunts involves:

Choosing the behaviour and scope (all privileged sign-ins to domain controllers for a specific quarter).

Picking broad telemetry sources (like authentication logs, network flow records, or Zeek conn.log).

Deciding on statistical or heuristic markers of normal (like typical source IP ranges, business hours, usual protocols).

During execution, you aggregate counts and distributions and look for the top-talkers, usual ports, and standard geographic locations.

Any deviations become investigation leads: new services appearing suddenly, rare destination addresses, or off-hours activity spikes that break established patterns.

The Act phase turns baseline findings into:

Anomaly-based detections (like "new admin workstation connecting to DC after midnight").

Updated access control lists, firewall rules or jump-box policies.

Seeds for future hypothesis-driven hunts to dig deeper into suspicious deviations.

A concrete example: suppose you baseline SSH traffic from network devices and find that routers only ever connect to internal management systems. If later analysis shows an SSH session from a router to an external IP, that's a deviation that needs investigating and might become a permanent detection rule.
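The SSH example can be expressed as a small baseline-and-compare sketch. The flow tuples, device names, and the `INTERNAL_NETS` range below are illustrative assumptions; in practice the input would come from flow records or Zeek conn.log.

```python
import ipaddress
from collections import defaultdict

# Assumed internal address space; adjust to the real environment.
INTERNAL_NETS = [ipaddress.ip_network("10.0.0.0/8")]

# Hypothetical flow records: (source device, destination IP, destination port).
baseline_flows = [
    ("router-01", "10.0.5.10", 22),
    ("router-01", "10.0.5.11", 22),
    ("router-02", "10.0.5.10", 22),
]
recent_flows = [
    ("router-01", "10.0.5.10", 22),
    ("router-02", "203.0.113.45", 22),  # documentation-range address standing in for an external host
]


def build_baseline(flows):
    """Map each source device to the SSH destinations seen in the baseline period."""
    seen = defaultdict(set)
    for src, dst, port in flows:
        if port == 22:
            seen[src].add(dst)
    return seen


def find_deviations(baseline, flows):
    """Return SSH flows to destinations never seen for that source device."""
    deviations = []
    for src, dst, port in flows:
        if port == 22 and dst not in baseline.get(src, set()):
            addr = ipaddress.ip_address(dst)
            external = not any(addr in net for net in INTERNAL_NETS)
            deviations.append({"src": src, "dst": dst, "external": external})
    return deviations


deviations = find_deviations(build_baseline(baseline_flows), recent_flows)
print(deviations)
```

Here the only deviation is router-02 reaching an address outside the assumed internal range, which is the kind of lead the Act phase could turn into a standing detection.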

Model-Assisted Threat Hunts (M-ATH)

Model-Assisted Threat Hunts use machine learning or analytics to pre-surface patterns, but human analysts are still essential for interpretation and validation.

This approach combines the structured investigation of hypothesis-driven hunting with the anomaly detection of baseline hunting, with a dash of ML automation on top.

Models can run the gamut from simple unsupervised clustering on authentication patterns to sophisticated supervised models trained on labelled attack data.

They might run in SIEM UEBA modules, data lake notebooks, or dedicated analytics platforms.

Preparation for M-ATH requires:

Ensuring the right datasets (like process telemetry, DNS logs and proxy logs) are being consistently ingested and retained.

Picking which models or UEBA risk scores will guide the hunt.

Defining acceptable false-positive rates and triage workload guardrails.

Execution involves reviewing the highest-risk entities flagged by the models, then pivoting into raw logs to validate whether any anomalies match up with known TTPs.

A high-risk user score might indicate lateral movement, credential theft or data exfiltration, but only human analysis can confirm what the model has surfaced.

The Act phase for M-ATH might include:

Feeding confirmed malicious examples back into training datasets.

Converting repetitive high-confidence model outputs into deterministic detection rules.

Documenting model limitations so hunters understand where human judgment needs to step in.

M-ATH is the most technologically sophisticated of the PEAK hunt types, suitable for organizations with the data and analytics expertise to make it work.
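To make the M-ATH workflow more tangible, here is a deliberately simple sketch in which a robust statistic (distance from the median, scaled by the median absolute deviation) stands in for a real model or UEBA risk score. The user names, counts, threshold, and queue limit are assumptions chosen for illustration; the `limit` parameter mirrors the triage workload guardrail mentioned in the preparation steps.

```python
from statistics import median

# Hypothetical per-user counts of distinct hosts logged on to in one day.
hosts_per_user = {"alice": 3, "bob": 4, "carol": 2, "dave": 3, "svc-backup": 41, "erin": 4}


def robust_scores(values):
    """Score each entity by its distance from the median, scaled by the
    median absolute deviation (MAD). Higher means more unusual."""
    med = median(values.values())
    mad = median(abs(v - med) for v in values.values()) or 1.0  # guard against a zero MAD
    return {name: abs(v - med) / mad for name, v in values.items()}


def review_queue(values, threshold=5.0, limit=10):
    """Return the highest-scoring entities for human review, capped at `limit`."""
    flagged = [(name, score) for name, score in robust_scores(values).items() if score >= threshold]
    return sorted(flagged, key=lambda item: item[1], reverse=True)[:limit]


for name, score in review_queue(hosts_per_user):
    print(f"review {name}: score {score:.1f}")
```

The score only says that svc-backup behaves differently from its peers. Whether that reflects lateral movement or a legitimate backup job is still a question for the analyst pivoting into raw logs.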

Understanding these hunt types is useful, but the real value comes from seeing how they move through the PEAK lifecycle itself. The framework becomes much clearer once you walk through how a hunt actually unfolds across the three phases.

Operationalizing the PEAK Framework Phases

Now let's see how to make each PEAK phase a reality within existing tools, whether that's a SIEM, EDR, NDR or SOAR platform. One scenario runs through all three phases: hunting for adversaries targeting backup infrastructure, a pattern described in recent security advisories.

When you operationalize each PEAK phase, you'll produce specific artifacts and involve defined roles. The framework gives you structure, without telling you which tech to use, letting teams implement PEAK regardless of their existing vendor stack.

Phase 1: Prepare

The Prepare phase gets everyone on the same page and makes sure you're tackling impactful risks, not just wading through log data for kicks. Time spent here will make the execution phase much more effective.

Here's what you need to do to prepare:

Define the hunt goal based on a real threat:

"Catch activity that's consistent with an actor deploying ransomware to backup servers."

"Reveal edge-device persistence similar to campaigns we've seen in recent government advisories."

Translate threat intel into something concrete and testable:

Use a pattern like Actor--Behaviour--Location--Evidence.

Example: "A ransomware operator (actor) disabling backup services and deleting shadow copies (behaviour) on Windows backup servers (location) within the last 30 days (evidence scope)."

Work out which data sources you need:

EDR telemetry from backup servers.

Storage appliance logs.

VPN logs for admin access.

Network telemetry at the data centre edge.

Make some scoping decisions:

How far back to look (the previous 30 or 60 days).

Which systems to investigate (production backup clusters and their management interfaces).

Which constraints you need to work around (performance impact, query cost in cloud SIEM).
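One way to keep these Prepare-phase decisions together is a small structured object. The sketch below is an assumption about how a team might capture them; the `HuntPlan` class and its field names are hypothetical and not part of PEAK itself.

```python
from dataclasses import dataclass, field
from datetime import date, timedelta


@dataclass
class HuntPlan:
    """Hypothetical container for Prepare-phase decisions (names are illustrative)."""
    actor: str
    behaviour: str
    location: str
    lookback_days: int
    data_sources: list = field(default_factory=list)
    constraints: list = field(default_factory=list)

    def hypothesis(self) -> str:
        """Render the Actor--Behaviour--Location--Evidence pattern as one testable sentence."""
        return (f"{self.actor} {self.behaviour} on {self.location} "
                f"within the last {self.lookback_days} days")

    def window(self, today: date) -> tuple:
        """Return the (start, end) dates that hunt queries should cover."""
        return today - timedelta(days=self.lookback_days), today


plan = HuntPlan(
    actor="A ransomware operator",
    behaviour="disabling backup services and deleting shadow copies",
    location="Windows backup servers",
    lookback_days=30,
    data_sources=["EDR telemetry", "storage appliance logs", "VPN logs", "edge network telemetry"],
    constraints=["query cost in cloud SIEM"],
)
print(plan.hypothesis())
print(plan.window(date.today()))
```

Writing the plan down in a form like this makes the scope explicit before any query runs, and the same object can later be attached to the hunt's documentation.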

The Prepare phase produces a few useful artifacts:

A short hunt plan covering what to do and when.

A list of permissions and access requirements.

Some pre-built queries or dashboards to speed up execution.

Don't skip this phase: it's what makes the difference between a successful hunt and a waste of time. Teams that skip it often end up wandering through log data without a clear idea of what they're looking for.

Phase 2: Execute

The Execute phase is where you run the plan you made in the Prepare phase, and start drilling down into the interesting bits. This is where you run queries, look at anomalies, and decide whether to widen or narrow the search.

Typically, you start with broad queries to validate your assumptions, such as what normal backup admin logons and replication network paths look like. This gives you context before you drill down into the interesting stuff.

Once you have that context, you start looking for deviations from the norm, like backup servers initiating RDP or SMB sessions to unusual hosts.

Investigative techniques include:

Visualizing logon failures and successes in a time-series chart.

Pivoting from one log source to another using common identifiers like hostnames or user IDs.

Labelling events as benign, suspicious, or confirmed malicious so you can see how you got there.
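Two of the techniques above, labelling events and pivoting between log sources on a shared identifier, can be sketched as follows. The event fields, host names, and the `EXPECTED_PEERS` allow-list are assumptions made for the example; real data would come from EDR and authentication logs.

```python
from collections import defaultdict

# Hypothetical, simplified events from two log sources that share a hostname field.
network_events = [
    {"host": "backup-01", "dest": "backup-02", "service": "SMB"},
    {"host": "backup-01", "dest": "hr-laptop-17", "service": "RDP"},
]
logon_events = [
    {"host": "backup-01", "user": "svc-backup", "result": "success"},
    {"host": "backup-01", "user": "jdoe", "result": "failure"},
]
EXPECTED_PEERS = {"backup-01": {"backup-02"}}  # assumed replication partners


def label_network_events(events, expected_peers):
    """Tag each session as benign or suspicious based on the expected-peer list."""
    labelled = []
    for event in events:
        known = event["dest"] in expected_peers.get(event["host"], set())
        labelled.append({**event, "label": "benign" if known else "suspicious"})
    return labelled


def pivot_by_host(labelled, logons):
    """For every host with a suspicious session, collect the logons recorded on that host."""
    by_host = defaultdict(list)
    for logon in logons:
        by_host[logon["host"]].append(logon)
    return {e["host"]: by_host[e["host"]] for e in labelled if e["label"] == "suspicious"}


labelled = label_network_events(network_events, EXPECTED_PEERS)
context = pivot_by_host(labelled, logon_events)
print(labelled)
print(context)
```

Keeping the label on each event preserves the reasoning trail, so a reviewer can later see why a session was treated as suspicious and which logons were examined alongside it.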

The Execute phase is iterative: you might start with one hypothesis, but as you dig deeper and find new evidence, your theory might change. And that's okay. Even if you don't find anything malicious, confirming that your security controls are working is valuable too.

What you should get out of the Execute phase is:

Confirmed or disproved hypotheses with some actual evidence.

Some interesting patterns that you might want to follow up on next time.

A nice tidy pack of evidence (queries, screenshots, log snippets) ready for the Act phase to turn into something useful.

During execution, visibility matters. When investigating suspicious IPs or domains, hunters often need to pivot across certificates, JA4X fingerprints, hosting clusters, and historical infrastructure.

An advanced threat hunting platform like Hunt.io accelerates this step by surfacing open ports, TLS fingerprints such as JA4X and JARM, certificate reuse, hosted domains, and ASN relationships in one view. That makes it easier to expand a hypothesis beyond a single indicator and understand the broader infrastructure behind an intrusion.

Phase 3: Act (with Knowledge)

The Act phase is where you take the knowledge you've gained and turn it into something real. It's about preventing all that useful information from getting stuck in analyst notebooks.

What typically happens in the Act phase is:

Create or refine some detections:

SIEM rules for the specific malicious activity you found.

EDR detection rules for suspicious process behaviours.

Network signatures for command-and-control patterns.

Update your incident response playbooks:

Document any new procedures you've observed.

Add some investigation steps for similar incidents in the future.

Include any relevant IOCs and behavioural indicators.

Propose some hardening changes:

Restrict remote management paths.

Segment backup networks from the rest of the infrastructure.

Implement some additional monitoring on critical systems.

It's a good idea to document your hunts in a structured format:

| Element | Description |
|---|---|
| Objective | What the hunt aimed to discover |
| Scope | Systems, timeframe, and constraints |
| Hypotheses | Specific testable statements investigated |
| Data Sources | Logs and telemetry used |
| Queries | Key searches performed |
| Results | Findings, including negatives |
| Detection Artifacts | Rules or signatures created |
| Lessons Learned | Data gaps, technique effectiveness |
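A template like this is easier to enforce when it is machine-readable. The sketch below assumes hunt records are stored as JSON with one key per table element; the key names, the sample content, and the `missing_elements` helper are hypothetical.

```python
import json

# One key per element in the documentation table (key names are an assumption).
REQUIRED_ELEMENTS = [
    "objective", "scope", "hypotheses", "data_sources",
    "queries", "results", "detection_artifacts", "lessons_learned",
]


def missing_elements(record):
    """Return template elements absent from a hunt record.
    Empty values are allowed, so negative results can be recorded explicitly."""
    return [key for key in REQUIRED_ELEMENTS if key not in record]


record = {
    "objective": "Identify ransomware staging on backup servers",
    "scope": "Production backup clusters, previous 30 days",
    "hypotheses": ["Operator disables backup services and deletes shadow copies"],
    "data_sources": ["EDR telemetry", "VPN logs"],
    "queries": ["process creation events for shadow copy deletion"],
    "results": "No malicious activity found",
    "detection_artifacts": [],
}

gaps = missing_elements(record)
print("missing:", gaps)
print(json.dumps(record, indent=2))
```

In this sample the record is flagged for lacking lessons learned, while the empty list of detection artifacts passes: a hunt that finds nothing is still worth documenting.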

Share this knowledge widely:

With your SOC and IR teams during regular review meetings.

With the platform owners responsible for backups, identity and edge devices.

With leadership in a concise way, like "3 new detection rules deployed" or "2 misconfigurations fixed."

When infrastructure patterns repeat across campaigns, enrichment platforms like Hunt can help security teams transform those patterns into reusable logic rather than isolated indicators.

The Act phase is what feeds into the next Prepare phase: capturing lessons learned so that you can do better next time.

When this cycle repeats over time, it begins to shape the maturity of the entire hunting program. What starts as isolated hunts gradually turns into a structured capability that improves with each iteration.

PEAK Threat Hunting Lifecycle and Program Maturity

Individual hunts are important, but the real value comes from running PEAK as a continuous lifecycle, measuring maturity over months and years. Each Prepare → Execute → Act cycle feeds metrics and lessons into the next iteration.

Over time, an organization builds up a collection of hunts, detections, and playbooks that track the current state of adversary tradecraft. That growing collection, rather than a pile of one-off activities, is what continuous improvement looks like.

Programs typically move through four maturity stages:

Initial Stage

Hunts are pretty ad-hoc, only kicked off when something big has gone wrong.

Not much in the way of documentation.

Rarely do the findings from a hunt actually get used to create any new detections.

There aren't really any consistent metrics in place.

Developing Stage

Hunts are now scheduled regularly, focusing on some of the areas we know are at highest risk (like identity, backups, and cloud control planes).

We're starting to keep track of the things we've found and the detections we've created.

There's some basic knowledge sharing going on between the hunters.

We're starting to design repeatable processes that help us stay consistent.

Mature Stage

Hunts are now planned in line with threat intelligence.

We're routinely turning validated patterns into automated defences.

We're regularly reviewing our detection coverage to see if we're missing anything that we should be looking for.

Our metrics are driving our resource allocation decisions.

Optimised Stage

Our hunting is now fully integrated with detection engineering, CTI, red teaming and architecture review.

Our metrics continuously drive budget and design decisions.

We've got a proactive strategy in place that is guiding our security investments.

Our hunts are continually improving our overall security posture.

PEAK provides the scaffolding that lets us move between these stages without needing a specific vendor stack. We can start with just the basics and gradually bring in more telemetry and analytics as our program matures.

To make this progression more concrete, it helps to walk through an example. The following scenario shows how a PEAK hunt might look when applied to a real-world security problem.

PEAK in Practice: An Example Hunt on Critical Infrastructure

This scenario shows PEAK in practice: hunting for adversaries targeting backup infrastructure and VPN gateways, a pattern that has persisted for years and is documented in security advisories from 2023 and 2024.

Prepare Phase

The hunt goal is to determine whether any backup management interfaces or VPN gateways have been used as an entry point for attackers to move laterally through our network over the last 45 days.

We're looking at a bunch of different data sources:

The logs from our VPN appliance.

The logs from the management plane of our network devices.

The authentication logs from our backup servers.

And some network flow data for the management subnets.

The hypothesis is that threat actors have compromised edge devices and are using them for persistent access, a pattern seen in recent high-profile campaigns against organizations across many sectors.

Execute Phase

We start by doing some baseline analysis. We establish what normal admin access to the management interfaces looks like: connections from specific jump hosts and admin-team IP addresses during working hours. This gives us the context we need to spot any anomalies.

Then we do a hypothesis-driven investigation. We search for deviations from what we've seen before:

SSH or HTTPS management connections from IP addresses we don't know.

Backup servers that initiate outbound connections to Internet hosts.

Configuration changes to edge devices outside maintenance windows.

Authentication attempts using service accounts outside normal hours.

As we investigate, some high-level findings emerge. We identify a small number of sessions from an unusual IP range connecting to our VPN appliances at times when we wouldn't normally see them.

We correlate these sessions with the appliance logs and find suspicious commands and configuration changes that don't match any legitimate admin task.
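The first pass of this investigation, checking management sessions against the baseline of known admin sources and working hours, might look like the sketch below. The admin subnet, the working-hours range, the host names, and the session records are all assumptions for illustration.

```python
import ipaddress
from datetime import datetime

ADMIN_NETWORKS = [ipaddress.ip_network("10.20.0.0/24")]  # assumed jump-host subnet
WORK_HOURS = range(8, 18)  # assumed working hours, 08:00 to 17:59 local time

# Hypothetical management-plane sessions.
sessions = [
    {"src": "10.20.0.15", "target": "vpn-gw-01", "start": datetime(2025, 2, 3, 10, 30)},
    {"src": "198.51.100.77", "target": "vpn-gw-01", "start": datetime(2025, 2, 4, 3, 12)},
    {"src": "10.20.0.15", "target": "backup-mgmt", "start": datetime(2025, 2, 5, 23, 40)},
]


def deviation_reasons(session):
    """List the ways a session departs from the baseline (empty list means normal)."""
    reasons = []
    src = ipaddress.ip_address(session["src"])
    if not any(src in net for net in ADMIN_NETWORKS):
        reasons.append("unknown source")
    if session["start"].hour not in WORK_HOURS:
        reasons.append("outside working hours")
    return reasons


findings = []
for session in sessions:
    reasons = deviation_reasons(session)
    if reasons:
        findings.append((session["target"], session["src"], reasons))

for target, src, reasons in findings:
    print(f"{target} <- {src}: {', '.join(reasons)}")
```

A session that trips both checks, like the 03:12 connection to the VPN gateway in the sample data, is the natural starting point for correlating with appliance command logs.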

Act Phase

The team takes the findings from the hunt and turns them into lasting improvements:

New detection rules

Alert on management access from IP addresses that don't normally access the management interfaces.

Flag authentication by service accounts at strange times of day.

Detect configuration changes on network devices without any corresponding change tickets.

Hardening changes

We force all admin tasks to go through a monitored jump host.

We segment the network so that management plane access is restricted.

We enable detailed logging on all the management interfaces for the edge devices.

Updated playbooks

We document the investigation procedure for suspected edge-device compromise.

We include specific log sources and queries that the team can use to do a rapid triage.

We define the escalation paths and containment actions that should be taken.
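As an illustration of how one of the detection rules above could work, the sketch below flags configuration changes that no change ticket covers. The record shapes and device names are hypothetical; a real rule would live in the SIEM and pull tickets from the change-management system.

```python
from datetime import datetime

# Hypothetical configuration-change events from edge devices.
config_changes = [
    {"device": "vpn-gw-01", "time": datetime(2025, 2, 4, 3, 20), "user": "admin"},
    {"device": "fw-edge-02", "time": datetime(2025, 2, 6, 14, 0), "user": "netops"},
]
# Hypothetical approved change windows.
change_tickets = [
    {"device": "fw-edge-02", "start": datetime(2025, 2, 6, 13, 0), "end": datetime(2025, 2, 6, 15, 0)},
]


def unticketed_changes(changes, tickets):
    """Return config changes with no ticket covering that device at that time."""
    def covered(change):
        return any(
            ticket["device"] == change["device"]
            and ticket["start"] <= change["time"] <= ticket["end"]
            for ticket in tickets
        )
    return [change for change in changes if not covered(change)]


alerts = unticketed_changes(config_changes, change_tickets)
for alert in alerts:
    print(f"ALERT: {alert['device']} changed by {alert['user']} at {alert['time']} with no ticket")
```

Matching on both device and time window keeps a valid ticket for one device from masking an unapproved change on another.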

This single PEAK-structured hunt uncovered risky behaviour and permanently raised our defensive baseline against similar attacks. It also produced detections that will fire automatically if the bad guys try similar techniques in the future.

Conclusion

Threat hunting delivers the most value when it becomes a structured, repeatable practice rather than an occasional investigation. The PEAK framework helps teams organize hunts, capture knowledge, and turn findings into lasting defensive improvements.

At Hunt, we help threat hunters move faster by exposing infrastructure relationships, TLS fingerprints, certificates, hosted domains, and historical activity that make it easier to expand investigations and uncover hidden attacker infrastructure.

Book a demo now to see how we can support PEAK-style investigations in your environment.

Hunt adversary infrastructure in real time. Surface C2 servers, enrich IOCs,

and map attacker activity at scale with our unified threat hunting platform.
